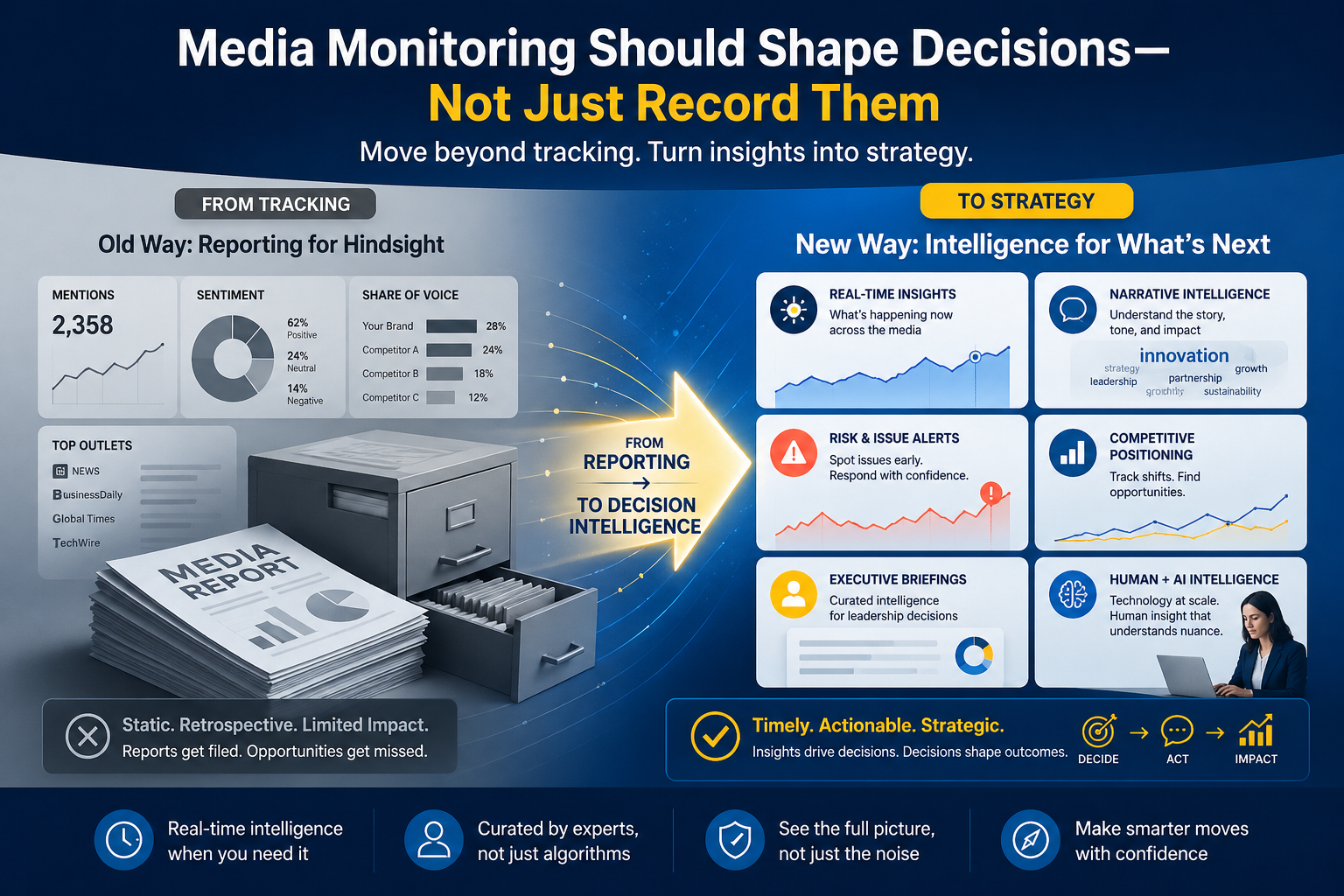

You have placed coverage in a strong outlet. The journalist wrote a solid story. And now you are wondering whether anyone actually sees it, including the AI engines that are increasingly shaping what audiences find.

That question matters more than it did two years ago. AI search engines do not index everything equally. They select, extract, and cite. The articles that make the cut get surfaced in AI-generated responses across ChatGPT, Gemini, Google AI Overview, and similar platforms. Those that do not are passed over, regardless of placement quality.

New research from Fullintel and Dr. Tyler Page from the University of Connecticut analyzed 6,183 URLs across three AI platforms and coded 1,334 news articles to identify which content characteristics predict citation. The clearest pattern: educational content was cited roughly 2.4 times the rate of the next-most-cited article type in the study sample.

That gap is large enough to change how PR teams should think about content strategy.

What Educational Content Actually Means in This Context

The article type categories in this research were coded by trained researchers who reviewed the full text of each article. Educational content refers to articles that explain, teach, compare, or directly answer a question. This is distinct from coverage that primarily announces news, reacts to an event, or aggregates multiple topics.

In the cited sample of 1,334 articles, 484 were educational. The next largest category, answer-style articles, accounted for 206. Comparison pieces came in at 128. Feature articles and government sources followed. The dominance of educational content is not coincidental. It reflects how AI retrieval systems are designed to function.
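To put those counts in proportion, here is a minimal sketch of the arithmetic. It uses only the three category counts and the sample total quoted above; the variable and function names are illustrative and do not come from the study.

```python
# Article counts by coded type, as quoted above for the cited sample.
# Only the three largest categories are listed; the remaining articles
# belong to categories whose counts are not given in this article.
TOTAL_CITED = 1334
counts = {"educational": 484, "answer-style": 206, "comparison": 128}


def share(count, total=TOTAL_CITED):
    """Return a category's share of the cited sample as a percentage."""
    return 100 * count / total


shares = {name: round(share(n), 1) for name, n in counts.items()}

# How many educational articles there are for each answer-style article.
ratio = counts["educational"] / counts["answer-style"]

for name, pct in shares.items():
    print(f"{name}: {counts[name]} articles, {pct}% of cited sample")
print(f"educational vs. answer-style: {ratio:.2f}x")
```

On these counts, educational articles make up a little over a third of the cited sample, and the educational-to-answer-style ratio works out to about 2.35.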

The Retrieval Logic Behind the Pattern

AI search engines are built to respond to queries. A user asks a question. The system finds content that addresses it, extracts the relevant passage or summary, and synthesizes a response. The sources it cites are the ones it found most useful in completing that task.

An article that explains what GLP-1 medications are and how they work is structurally easier to use as a cited source than an article reporting that a pharmaceutical company announced record sales. Both might appear in the same publication on the same day. One is an answer asset. One is a news asset. AI engines favor the answer asset.

The research team described this as content that behaves like an answer asset: easy to extract, easy to summarize, easy to trust. Those three properties align directly with what retrieval systems need to do their job.

Answer-Style Articles Appear Across the Amplification Models

The research tested two distinct outcome variables beyond simple citation entry. The first was AI Responses: how often an article appeared in AI-generated answers. The second was Citation Consistency: how reliably it was reused across multiple prompts and platforms.

Answer-style articles were the strongest predictor in both models. In the AI Responses model, answer-style content had a beta coefficient of .136 (p < .001). In the Influence Score model, the same content type showed a beta of .116 (p < .001). These are the highest coefficients reported across all variables tested.

Comparison articles also showed a significant positive association with AI Responses (beta = .083, p < .05). Content structured around comparing options, explaining differences, or evaluating alternatives performs well because it directly serves query-matching logic.

What This Means for Media Relations

Most media relations work is built around the news hook: what is new, what changed, what was announced. That logic aligns well with traditional editorial selection criteria. It does not translate directly into AI citation behavior.

This does not mean abandoning news-driven pitching. It means adding a second lens to the earned media strategy. When you want durable coverage, the kind that surfaces in AI-generated responses weeks or months after publication, the pitch angle matters.

An announcement pitch might generate coverage for one day. A pitch that helps a journalist write an educational explainer, a how-it-works piece, or a comparison of approaches creates an article that retrieval systems can use as a source for a much longer window of time.

Applying the Finding to Content Planning

Three questions help identify whether planned content or a media pitch has the structural characteristics associated with higher AI citation rates.

First: Does this content answer a specific question? Not a general topic area, but an actual question someone would type into a search interface. If you cannot identify the question, the content is probably structured as an announcement rather than an answer.

Second: Is the content self-contained? Articles that require readers to click away to understand the point behave more like aggregators than answer assets. AI systems prefer contained articles that provide the information directly.

Third: Can a single paragraph from this content stand alone as a useful answer? If an AI engine pulled one excerpt from the article to cite in a response, would that excerpt make sense on its own? Answer assets are built for extraction. Content that only works when read in full is harder to use.
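For teams that want to apply the three questions consistently, they can be captured as a simple checklist. The sketch below is one hypothetical way to do that: the class name, field names, and the labels it returns are assumptions made for illustration, not a scoring method from the research.

```python
from dataclasses import dataclass


@dataclass
class ContentCheck:
    """One planned article or pitch, reviewed against the three questions."""

    title: str
    answers_specific_question: bool  # First question
    self_contained: bool             # Second question
    excerpt_stands_alone: bool       # Third question

    def score(self) -> int:
        """Count how many of the three questions get a yes."""
        return sum(
            [
                self.answers_specific_question,
                self.self_contained,
                self.excerpt_stands_alone,
            ]
        )

    def label(self) -> str:
        """Map the score to an illustrative label (hypothetical wording)."""
        if self.score() == 3:
            return "answer asset"
        if self.score() == 0:
            return "announcement-style"
        return "partial: revisit the questions answered no"


# Hypothetical example pitches, invented for illustration only.
explainer = ContentCheck("How the new process works", True, True, True)
launch = ContentCheck("Company announces product launch", False, False, False)

for item in (explainer, launch):
    print(f"{item.title}: {item.score()}/3 -> {item.label()}")
```

The labels are deliberately coarse. The point is to make the review repeatable across a team, not to predict citation outcomes.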

The Relationship Between GEO and Earned Media

Generative engine optimization, or GEO, has emerged as a distinct discipline within digital strategy. Much of the early GEO conversation has focused on owned content: blog posts, FAQ pages, and structured data on brand websites. The Fullintel research suggests that earned media plays a significant role in AI citation behavior, and that PR measurement frameworks need to account for it.

Coverage in high-authority publications that is structured as educational or answer-style content is already being cited in AI responses. Whether PR teams are tracking that citation behavior is a separate question. Most are not yet.

The measurement gap matters. If you do not know which of your placements are generating AI citations, you cannot optimize for that outcome. Fullintel’s strategic media analysis team provides the analytical layer to make that picture visible.

One Shift Worth Making Now

Review the last 10 pieces of coverage your team generated or placed. Classify each one: is it primarily an announcement, a reaction, a roundup, or an educational explanation?

For the announcements and reactions, ask whether there was an opportunity to pitch a related educational angle alongside the news hook. Most product launches, executive appointments, and research releases have an explainer angle. The data suggests that explainer coverage, pursued intentionally, is the kind most likely to earn AI citations.
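The audit described above can be tallied in a few lines. This is a minimal sketch that assumes each piece has already been classified by hand into one of the four types; the sample labels are placeholder data, not real coverage, and the function names are illustrative.

```python
from collections import Counter

# The four coverage types named in the audit above.
CATEGORIES = ("announcement", "reaction", "roundup", "educational")


def tally(labels):
    """Count pieces per category, rejecting labels outside the four types."""
    unknown = set(labels) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    counts = Counter(labels)
    return {category: counts.get(category, 0) for category in CATEGORIES}


def educational_share(labels):
    """Return the fraction of pieces classified as educational."""
    if not labels:
        return 0.0
    return labels.count("educational") / len(labels)


# Placeholder classification of ten recent pieces (hypothetical data).
sample = [
    "announcement", "announcement", "reaction", "educational",
    "announcement", "roundup", "announcement", "reaction",
    "educational", "announcement",
]

summary = tally(sample)
print(summary)
print(f"educational share: {educational_share(sample):.0%}")
```

Rerunning the tally each quarter would give a simple baseline for whether the mix is shifting toward explainer coverage.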

Fullintel tracks coverage across hundreds of thousands of sources and works with communications teams to identify which earned media is generating meaningful signals, including AI citation patterns. If you want to understand how your current coverage portfolio performs against these characteristics, our media intelligence team can help you build that picture.